Key Takeaway

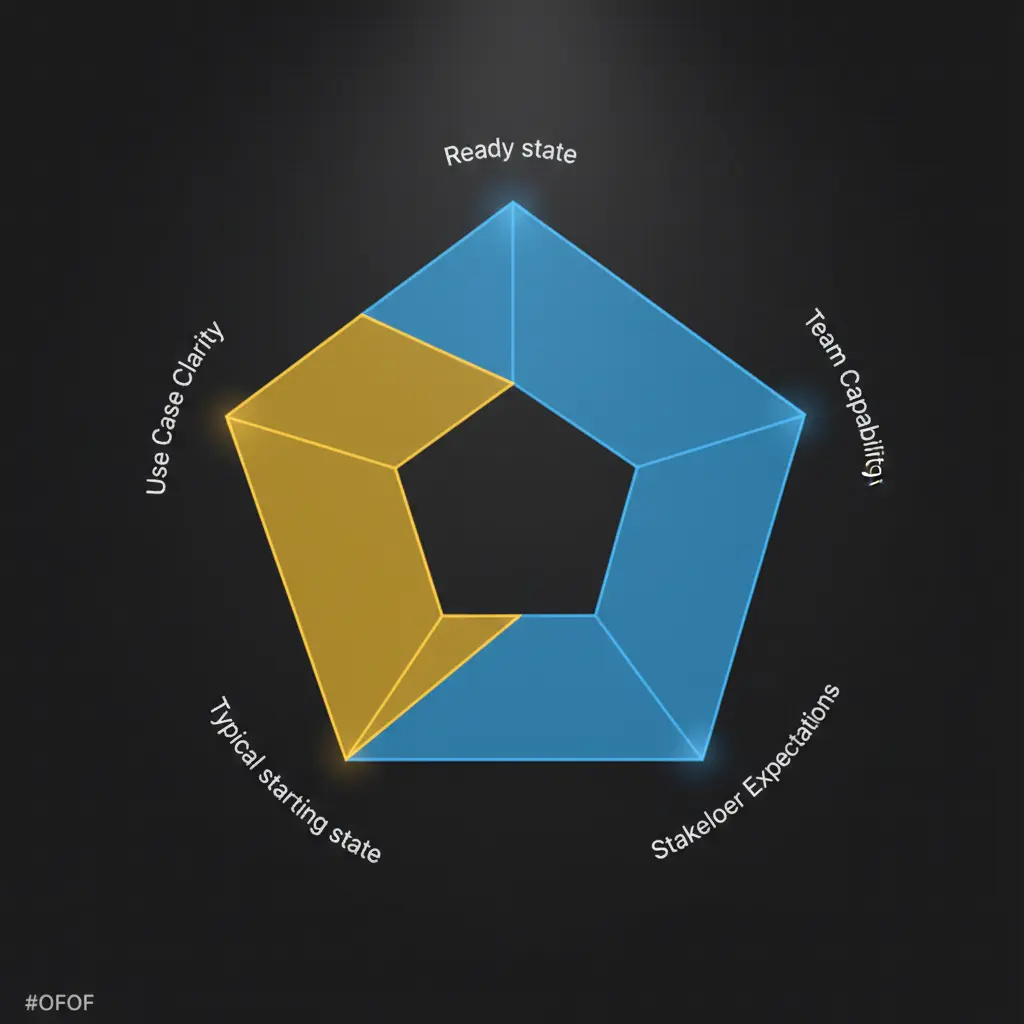

Before committing engineering resources to AI, assess five dimensions: use case clarity, data quality, architecture, team capability, and stakeholder expectations — and close specific gaps rather than waiting for perfect readiness.

The question founders and product leaders actually ask is not “should we add AI?” They’ve already decided yes. The real question is: “Are we ready to do this now, or are we about to spend six months building something that doesn’t work?”

That question deserves a real answer. Most “AI readiness” content does not provide one. It offers a checklist of things you should have — good data, clear goals, executive buy-in — without telling you how to assess whether you actually have them, or what to do when you partially have them.

Readiness is not binary. It is not something you either have or lack. You have a specific readiness profile across five dimensions, and each dimension has different implications for whether you can start now or need to do something specific first.

The five dimensions are: use case clarity, data quality and access, architecture, team capability, and stakeholder expectations. Work through each one honestly. The goal is not a clean score. It is an accurate picture of where you actually are.

Dimension 1: Use Case Clarity

This is where most AI initiatives fail before they start — not in the code, not in the data, but in the planning meeting.

A general AI ambition is not a use case. “Use AI to improve the onboarding experience” is not a use case. “Use an LLM to analyze the onboarding session recording and automatically populate the user’s profile with their stated goals and company context” is a use case.

The distinction matters because vague use cases cannot be built, evaluated, or iterated on. You can’t tell if a vague use case is working.

Signals you’re ready to move:

- You can describe the specific task in one sentence without using the words “improve,” “enhance,” or “optimize.”

- A human somewhere in your product or business currently does the task manually. That’s your baseline for evaluation.

- You can define what a correct output looks like in measurable terms. Not “the output should be helpful” — something like “the output should classify the incoming ticket into one of 12 categories with accuracy above 85% against our labeled test set.”

- You have at least 20 real examples of the task done correctly that you could use to evaluate AI outputs against.
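The measurable-output signal above can be sketched as a tiny evaluation harness. This is a minimal illustration, not a definitive implementation: `LabeledExample`, `accuracy`, `meets_target`, and `keyword_classifier` are hypothetical names, and the keyword rule stands in for whatever model call you would actually evaluate. The 85% target and 20-example minimum are taken from the signals above.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class LabeledExample:
    text: str      # the input a human handled manually
    expected: str  # the category the human assigned (your baseline)


def accuracy(classify: Callable[[str], str],
             examples: Sequence[LabeledExample]) -> float:
    """Fraction of examples where the classifier matches the human label."""
    if not examples:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for ex in examples if classify(ex.text) == ex.expected)
    return correct / len(examples)


def meets_target(classify: Callable[[str], str],
                 examples: Sequence[LabeledExample],
                 target: float = 0.85,
                 min_examples: int = 20) -> bool:
    """True only if the set is large enough and accuracy is above the target."""
    if len(examples) < min_examples:
        return False
    return accuracy(classify, examples) > target


# Hypothetical stand-in for a model call: a keyword rule, for illustration only.
def keyword_classifier(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "other"
```

If you cannot fill in `expected` for at least 20 real inputs, that is the gap to close before engineering starts.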

Signals to address first:

- The use case is still at the “improve X with AI” stage after more than one planning conversation.

- Nobody in the room can agree on what “good output” looks like.

- You don’t have examples of the task being done correctly, because the task has never been done before. A genuinely new capability with no human baseline is harder to evaluate and usually should not be the first AI feature you ship.

If your use case is unclear, the right move is a one-week definition sprint before any engineering resources commit. The goal: one candidate use case, described in one sentence, with a definition of correct output and a source of evaluation examples. Pick one and move.

Dimension 2: Data Quality and Access

The most common statement that blocks AI initiatives: “Our data isn’t ready.” It’s sometimes accurate. More often, it’s a proxy for anxiety about commitment, and the specific gap never gets named precisely enough to actually close it.

The question is not whether your data is perfect. It’s whether it’s sufficient for the specific use case you’ve defined, and whether the gaps are real blockers or just friction.

Signals you’re ready to move:

- The data your AI feature needs already exists in a structured, queryable form. Not buried in PDFs or log files that require significant extraction work before they’re usable.

- The data is current. For most product AI use cases, data older than 18 to 24 months tends to produce degraded results unless the domain is very stable, such as legal text, product specs, or historical records in a slow-moving field.

- You know the volume you have and whether it’s sufficient for your approach. Fine-tuning typically requires thousands of labeled examples. Retrieval-augmented generation (RAG) needs no labeled training data, but its answers are only as good as the corpus it retrieves from. Prompt engineering on a pretrained model works with any volume, since you’re not training anything.

- There’s a clear plan for personally identifiable information. If your data contains user information that can’t be passed to an external model provider, that needs a decision before integration code gets written, not after.

- Someone owns the data. A single person who is accountable for its quality and access. Shared ownership of data produces data problems that surface in production.
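One way to turn the signals above into named gaps rather than a general “our data isn’t ready” is a small check like the one below. It is a sketch under stated assumptions: the function and constant names are hypothetical, the 24-month cutoff is the upper end of the range mentioned above, and the 1,000-example floor for fine-tuning is an assumed lower bound for “thousands.”

```python
from datetime import date
from typing import List

# Assumed thresholds for illustration; tune them to your own use case.
MAX_AGE_MONTHS = 24
MIN_LABELED = {
    "fine_tuning": 1000,  # assumed floor for "thousands of labeled examples"
    "rag": 0,             # no labeled training data needed
    "prompting": 0,       # nothing is trained, so any volume works
}


def months_between(older: date, newer: date) -> int:
    """Whole calendar months from `older` to `newer` (day of month ignored)."""
    return (newer.year - older.year) * 12 + (newer.month - older.month)


def data_gaps(approach: str, labeled_count: int, last_updated: date,
              today: date, stable_domain: bool = False,
              has_owner: bool = True, pii_plan: bool = True) -> List[str]:
    """Return a list of specific, nameable gaps; empty means no blocker found."""
    gaps = []
    needed = MIN_LABELED[approach]
    if labeled_count < needed:
        gaps.append(f"have {labeled_count} labeled examples, need {needed}")
    age = months_between(last_updated, today)
    if age > MAX_AGE_MONTHS and not stable_domain:
        gaps.append(f"data is {age} months old")
    if not has_owner:
        gaps.append("no single data owner")
    if not pii_plan:
        gaps.append("no PII handling decision")
    return gaps
```

Each string in the result maps to a different remedy, which is the point: a gap you can name is a gap you can schedule.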

Signals to address first:

- The data exists but is poorly structured or requires significant cleaning. That’s a sprint of work, not a blocker. Scope it and schedule it.

- Data ownership is unclear. This is the most commonly skipped organizational decision. Fix it before building.

- PII handling has no defined approach. Your options are anonymization before sending to the model, on-premises inference, or picking a different use case that doesn’t touch sensitive fields. Make the decision now.
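The first option above, anonymization before sending to the model, can be sketched as a redaction step that runs on every prompt. This is a deliberately minimal illustration: the two regular expressions are assumptions that catch common email and phone formats only, and `redact` is a hypothetical helper. Real PII handling usually needs more than pattern matching (names, addresses, account numbers), so treat this as the shape of the step, not a complete filter.

```python
import re

# Illustrative patterns only; they do not cover every email or phone format.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace matched emails and phone numbers with placeholders
    before the text leaves your infrastructure."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com or +1 (555) 123-4567 today"))
```

Placing the redaction in one function that every model call passes through makes the decision auditable: there is exactly one place to review and test.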

Dimension 3: Architecture

AI features can often be added to existing products with modest infrastructure changes. The question is whether your product is structured to accept them without creating a maintenance problem you’ll pay for later.

Signals you’re ready to move:

- Your product has a stable API layer that AI components could call or be called by. A well-defined REST or GraphQL API that isn’t changing frequently underneath you gives AI features a reliable integration point.

- Your infrastructure can handle the latency characteristics of LLM calls. A typical LLM API response takes 1 to 10 seconds. Agentic workflows with multiple tool calls take longer. If your users are in real-time flows that already run close to their latency tolerance, you need a plan for this before you ship.

- You have observability in place — logging, error tracking, alerting — that can be extended to cover AI-specific failure modes. AI failures are different from standard application errors. You need to be able to see when output quality degrades, not just when requests fail.

- You can deploy AI features independently of the rest of the product, with feature flags and a rollback mechanism. Rolling back a bad AI feature at 2am should not require a full deployment.
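The latency and rollback signals above can be combined in one small wrapper: check a feature flag, give the model call a time budget, and fall back to the non-AI path if either says no. This is a sketch under assumptions. `FLAGS` is a hypothetical in-memory stand-in for whatever flag system you use, and `run_feature`, `model_call`, and `fallback` are illustrative names. The default 10-second budget is the upper end of the typical response time mentioned above.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Callable, Dict

# Hypothetical in-memory flag store; a real system would read this at runtime
# so a feature can be switched off without a deployment.
FLAGS: Dict[str, bool] = {"ai_summary": True}

_pool = ThreadPoolExecutor(max_workers=4)


def run_feature(name: str,
                model_call: Callable[[str], str],
                fallback: Callable[[str], str],
                payload: str,
                timeout_s: float = 10.0) -> str:
    """Use the AI path only if the flag is on and the call fits the budget."""
    if not FLAGS.get(name, False):
        return fallback(payload)
    future = _pool.submit(model_call, payload)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        future.cancel()  # best effort; a call already running is not interrupted
        return fallback(payload)
    except Exception:
        # Any provider or parsing error degrades to the non-AI path.
        return fallback(payload)
```

With this shape, rolling back at 2am is a flag flip: set `FLAGS["ai_summary"] = False` and every request takes the fallback path.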

Signals to address first:

- Each AI integration is wired directly into an individual component with no shared abstraction layer. This is fine for one feature. It becomes a maintenance problem at three or four features, when separate error handling, cost tracking, and prompt management are scattered across the codebase. Build a lightweight AI gateway service before the second feature.

- No feedback mechanism exists for users to report bad AI outputs. This is not optional infrastructure. It’s how you catch systematic failures before they compound. A thumbs up / thumbs down on AI outputs takes two days to build and is one of the most valuable things you’ll ship.

- No feature flag system is in place. If you can’t turn a feature off without a deployment, the risk profile of shipping changes significantly.
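A lightweight AI gateway, as described above, can start as a single class that owns prompt templates, error handling, cost tracking, and the thumbs up / thumbs down signal. The sketch below is one possible shape, not a prescribed design: `AIGateway` and its methods are hypothetical, `complete` is an injected placeholder for your provider call, and the flat `cost_per_call` is a simplifying assumption (real costs usually depend on token counts).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class AIGateway:
    complete: Callable[[str], str]  # hypothetical provider call, injected
    prompts: Dict[str, str]         # feature name -> prompt template
    cost_per_call: float = 0.0      # assumed flat cost per call
    calls: Dict[str, int] = field(default_factory=dict)
    errors: Dict[str, int] = field(default_factory=dict)
    feedback: Dict[str, List[bool]] = field(default_factory=dict)

    def run(self, feature: str, **values: str) -> Optional[str]:
        """Render the feature's prompt, call the model, and count the outcome."""
        prompt = self.prompts[feature].format(**values)
        self.calls[feature] = self.calls.get(feature, 0) + 1
        try:
            return self.complete(prompt)
        except Exception:
            self.errors[feature] = self.errors.get(feature, 0) + 1
            return None  # caller decides how to degrade

    def record_feedback(self, feature: str, thumbs_up: bool) -> None:
        """Store one thumbs up / thumbs down vote for a feature."""
        self.feedback.setdefault(feature, []).append(thumbs_up)

    def approval_rate(self, feature: str) -> Optional[float]:
        """Share of thumbs-up votes, or None if no feedback exists yet."""
        votes = self.feedback.get(feature, [])
        return sum(votes) / len(votes) if votes else None

    def spend(self, feature: str) -> float:
        """Estimated spend for a feature under the flat-cost assumption."""
        return self.calls.get(feature, 0) * self.cost_per_call
```

Because every feature goes through `run`, the second and third AI features inherit error counting, cost tracking, and feedback collection instead of reimplementing them.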

Dimension 4: Team Capability

Your engineers are excellent at what they do. But AI system design is a distinct discipline, and a gap there reflects experience, not ability.

The failure modes in AI features are unfamiliar to engineers who haven’t shipped one. Non-deterministic outputs, prompt sensitivity, context window management, evaluation design, agentic state recovery — these require patterns that aren’t learned from conventional software development. Teams that underestimate this gap ship features that work in development and degrade unpredictably in production.

Signals you’re ready to move:

- At least one engineer on the team has shipped an LLM integration to production, not just experimented in a development environment. Development doesn’t reveal the failure modes that production reveals.

- Your team has experience evaluating AI output quality. Not just whether code runs, but whether outputs are correct. This is a different skill than debugging a failing unit test.

- Someone on the team understands prompt engineering well enough to iterate when outputs are wrong. Treating the model as a black box produces a feature you can’t improve. Someone needs to know how to diagnose and fix output quality issues through prompt changes.

- For agentic systems specifically: someone on the team has experience with stateful systems and error recovery. This is the most underestimated capability gap. Agentic failures are actions, not words, and recovering from a bad state in a multi-step workflow requires different thinking than recovering from a bad API response.

Signals to address first:

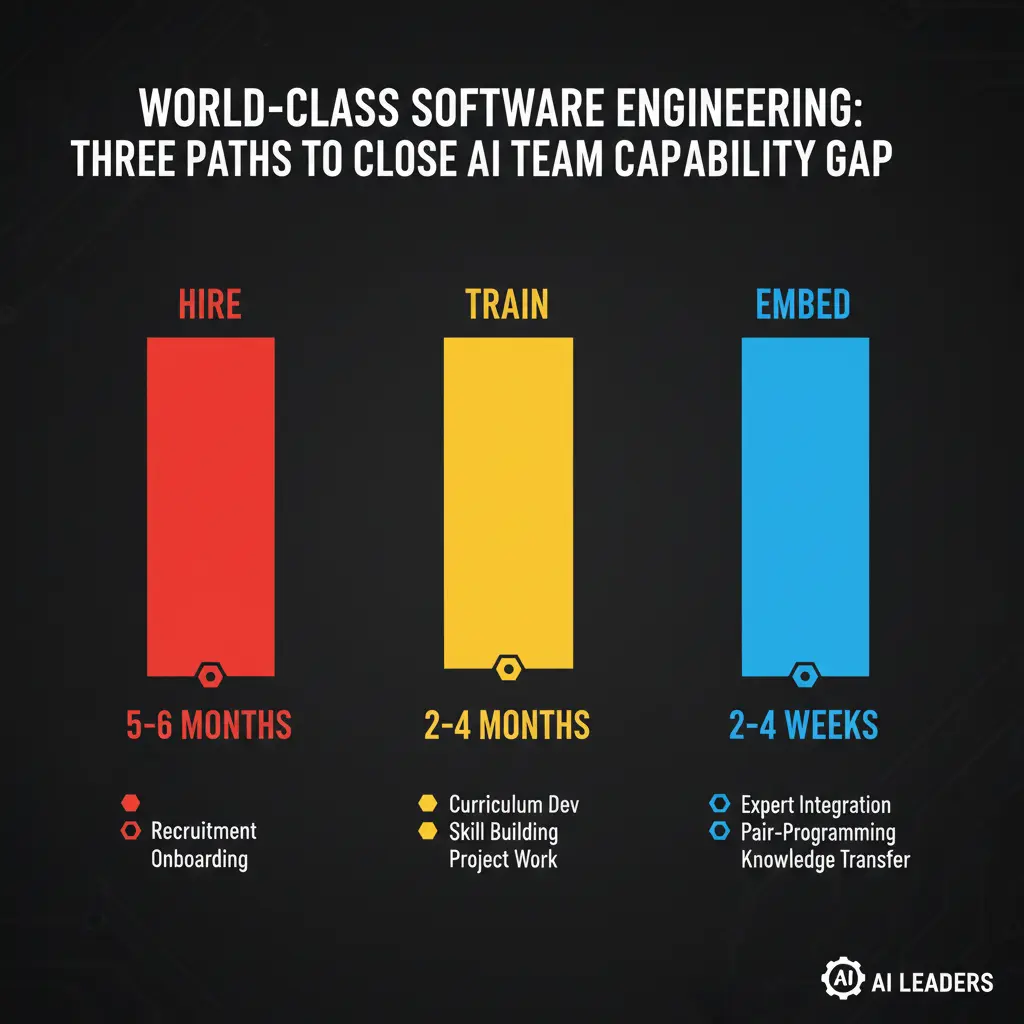

- No one on the team has shipped an AI feature to production. You can close this gap in three ways: hire a senior AI engineer (5 to 6 months to first real contribution), train the existing team (reasonable for generative features, harder for agentic systems), or embed AI expertise directly into the team. That third path closes the gap in 2 to 4 weeks rather than months.

- No named owner for the AI initiative. Someone needs to be accountable for the system’s behavior in production — including when it fails at 2am. If no one owns it, no one fixes it.

Dimension 5: Stakeholder Expectations

The team can be ready to build something excellent while the people around them expect something unrealistic. That mismatch kills initiatives after they ship, not before.

Signals you’re ready to move:

- Leadership understands that AI features require 2 to 3 iteration cycles after initial launch before outputs are reliable enough to trust at scale. The first launch is not the finished product. It’s the start of the evaluation and improvement loop.

- There’s an executive sponsor who controls budget and can defend the initiative when it hits its first significant failure or resource conflict. Every AI initiative hits one. Without a sponsor, the default response is to deprioritize the initiative.

- You have a specific, measurable definition of success beyond “it ships.” One metric. One target value. “Support ticket routing accuracy above 85% within 60 days of launch” is a success definition. “Improve support operations” is not. The definition of success determines whether iteration has direction.

- You have at least a rough incident plan. It names what constitutes a serious AI failure (bad output reaching users, an error rate above a threshold, a model provider outage), who gets paged, and what the response steps are. This takes an afternoon to document.
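A rough incident plan can be written down as data plus one check, which makes the thresholds explicit instead of implied. The sketch below assumes you already collect an error rate and a user approval rate. `IncidentPlan`, `triggered`, and the example threshold values are hypothetical and should be replaced with whatever your team agrees on.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class IncidentPlan:
    max_error_rate: float     # assumed threshold, e.g. 0.05 for 5% failed calls
    min_approval_rate: float  # assumed floor for thumbs-up share
    on_call: str              # who gets paged (hypothetical identifier)


def triggered(plan: IncidentPlan, error_rate: float,
              approval_rate: float, provider_up: bool) -> List[str]:
    """Return the reasons an incident should be opened; empty means all clear."""
    reasons = []
    if not provider_up:
        reasons.append("model provider outage")
    if error_rate > plan.max_error_rate:
        reasons.append("error rate above threshold")
    if approval_rate < plan.min_approval_rate:
        reasons.append("bad output reaching users")
    return reasons
```

For example, `triggered(IncidentPlan(0.05, 0.7, "ai-owner"), 0.10, 0.9, True)` flags only the error rate, which tells the person paged where to look first.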

Signals to address first:

- Leadership expects results immediately after launch. An AI feature that ships with 70% accuracy and improves to 88% over three iteration cycles is a success. If the expectation is 90% at launch, the team will ship something that isn’t ready or get blamed for results they can’t control.

- No sponsor is named. AI initiatives without sponsors have a high attrition rate at the first serious resource conflict. Name one before the first sprint starts.

- Success has no specific definition. This is uncomfortable to define early because it creates accountability. Define it anyway. You need it before the first sprint begins, not after the launch.

What to Do If Most Signals Point to “Not Ready Yet”

A low readiness score is not a reason to shelve the initiative. It’s a map of what to address before committing engineering resources.

The most common gap patterns and their remedies:

Unclear use case. Run a one-week definition sprint. The output is one use case described in one sentence, with a correctness definition and a source of evaluation examples. Not three candidates with no decision. One.

Data gaps. Name the specific gap — missing labels, PII contamination, no data owner, stale data. Each has a different remedy. “Our data isn’t ready” is not a specific gap. “We have 200 labeled examples and need 1,000” is.

Team capability gap. If you have a clear use case but no one who has shipped an AI feature before, the fastest path to production isn’t hiring. It’s embedding senior AI engineers into your team while your engineers build the capability alongside them.

Expectation misalignment. Have the conversation before the first sprint. Set the timeline, define the success metric, name the sponsor, document the incident response plan. Four hours of alignment conversation prevents months of friction.

Frequently Asked Questions

How do you assess AI readiness for a product?

Evaluate five dimensions: use case clarity (can you describe the task in one sentence?), data quality (does the data exist in structured form?), architecture (can your product accept LLM latency?), team capability (has anyone on the team shipped AI to production?), and stakeholder expectations (is there a sponsor and a measurable success definition?).

What’s the most common reason AI initiatives fail?

Vague use cases. Most teams that are six months in with nothing shipped don’t have a technical problem — they have a definition problem. If no one can agree on what correct output looks like, no engineering work will fix that.

How long does it take to close a team capability gap for AI?

Hiring a senior AI engineer takes 5 to 6 months to reach a first real contribution. Training an existing team takes 2 to 4 months for generative features, longer for agentic systems. Embedding senior AI engineers into your team closes the gap in 2 to 4 weeks.

One Question Before You Build

Before the first sprint begins, answer this: can you describe the specific task your AI feature will perform, in one sentence, with a definition of what correct output looks like?

If yes, you have enough clarity to start. If not, that’s the work to do before anything else.

Most teams that are six months into an AI initiative that hasn’t shipped don’t have a technical problem. They have a definition problem: engineering resources were committed before the definition existed.

Get the definition right. Everything else is solvable.
